Wearables, IoT, and offline inference

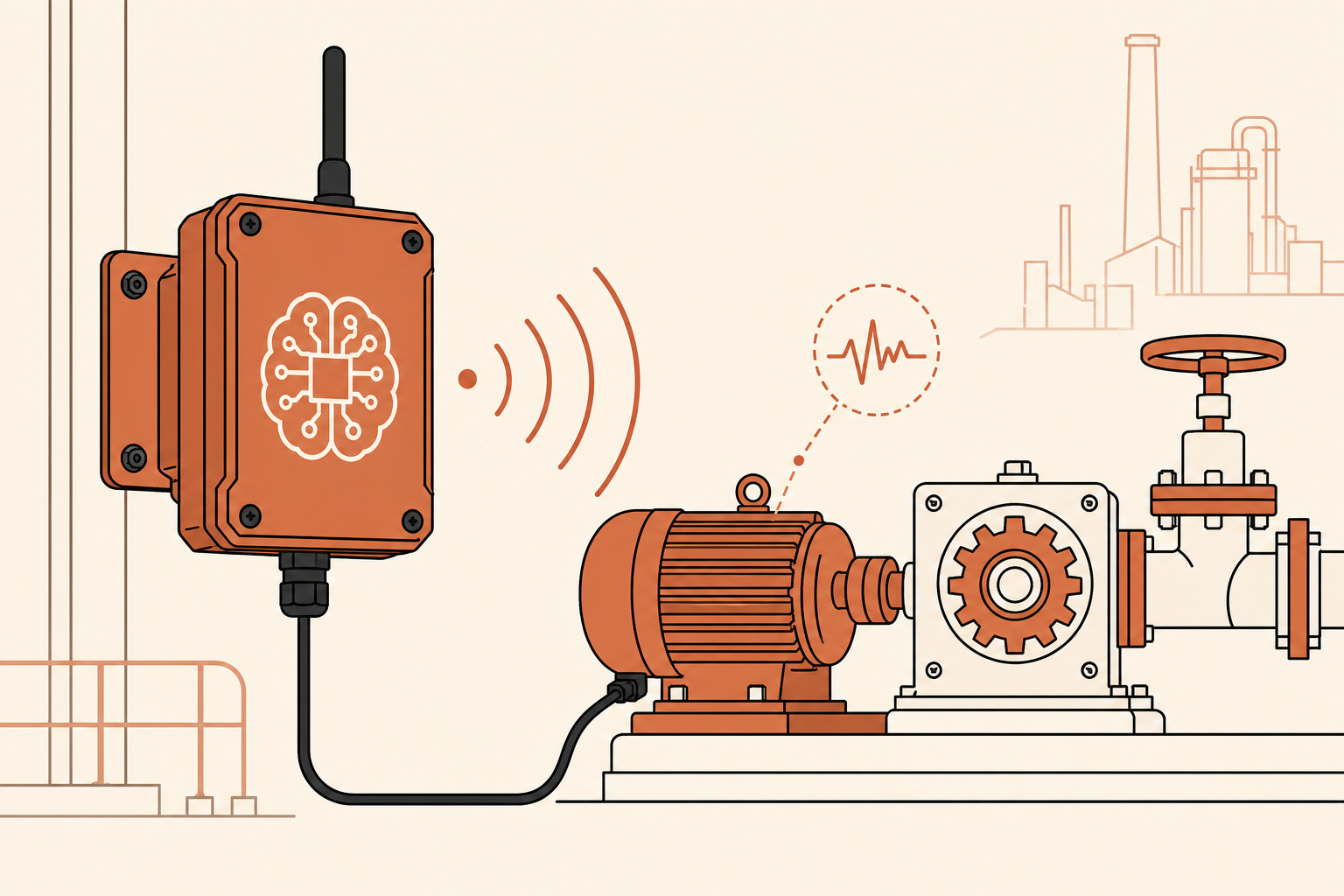

A meaningful share of enterprise value lives in places the cloud can't reliably reach - factory floors, vehicles in transit, oil and gas sites, hospitals during a network outage, wearables on a patient. Edge AI is what keeps the system useful when the network is unreliable, the latency budget is in milliseconds, or the data is too sensitive to leave the device. None of that is solved by sending everything back to a cloud endpoint.

The technical work is interesting; the organizational work is harder. Edge programs sit between the cloud-trained ML team and the embedded firmware team, and those two organizations rarely share a cadence, a review process, or a definition of "ready." We have spent years working at both ends - distilling models that run on constrained hardware in real time, and shipping firmware that field engineers can actually maintain.

For a CTO, the right test of an edge program is what happens when something goes wrong in the field. Can the device report meaningfully? Does the offline-first state reconcile cleanly when it reconnects? Is there a documented degradation path when the model isn't confident? We build for those answers from the first prototype, not as a hardening pass before launch.
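To make "a documented degradation path" concrete, here is a minimal Python sketch of a confidence gate. It is an illustration under assumptions, not a description of any particular deployment: the `Decision` type, the `decide` function, and the 0.8 threshold are all hypothetical, and a real device would pick its own threshold and fallback behavior.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Decision:
    label: Optional[str]  # None when the device declines to act on the model
    confidence: float     # probability of the top-scoring class
    degraded: bool        # True when the fallback path was taken


def decide(probs: Sequence[float], labels: Sequence[str],
           threshold: float = 0.8) -> Decision:
    """Return the top label only if the model is confident enough.

    The threshold is an assumed value for illustration; below it the
    caller is expected to fall back (for example, to a rule-based check
    or to flagging the reading for later review).
    """
    best = max(range(len(probs)), key=lambda i: probs[i])
    confidence = probs[best]
    if confidence < threshold:
        return Decision(label=None, confidence=confidence, degraded=True)
    return Decision(label=labels[best], confidence=confidence, degraded=False)
```

The point of returning `degraded` explicitly is that the device can log and report it, which is what makes a field failure diagnosable after the fact.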

Three ways this shows up in production.

On-device inference

Distilled models that run on constrained hardware in real time.

Hardware partnerships

We work with the firmware team. Not over the wall - at the bench.

Sync when reconnected

Offline-first state, reconciled on reconnect under an explicit conflict policy.
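
As one illustration of what a conflict policy can look like, the Python sketch below reconciles device state with server state using last-writer-wins. This is an assumed policy chosen for brevity, not the only option: the `Record` type and `reconcile` function are hypothetical names, and timestamp-based policies depend on device clocks that may drift, so production systems often use version counters or per-field merge rules instead.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Record:
    key: str
    value: str
    updated_at: float  # seconds since epoch, as recorded by the writer


def reconcile(local: Dict[str, Record],
              remote: Dict[str, Record]) -> Dict[str, Record]:
    """Merge offline device state into server state.

    Policy (an assumption for this sketch): the record with the newer
    timestamp wins; on an exact tie the server copy is kept.
    """
    merged = dict(remote)
    for key, record in local.items():
        existing = merged.get(key)
        if existing is None or record.updated_at > existing.updated_at:
            merged[key] = record
    return merged
```

Whatever policy is chosen, writing it down as a single function like this makes it reviewable by both the ML team and the firmware team.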