We believe you can build with AI agents quickly, without losing control. We believe in organizations that operate by method, not in organizations that depend on a star developer who knows how to phrase a good prompt. We believe real productivity comes from measurement, not from declaration.

The failure mode is rarely the model

Across roughly forty production deployments we have watched the same arc. An AI-assisted team ships fast for the first ten pull requests. By PR fifty, velocity has collapsed: inconsistent patterns, half-finished refactors, tests that pass but mean nothing, and a growing pile of code that nobody on the team fully understands. The next engineer takes three weeks to onboard instead of three days.

The model is fine. What collapses is the organizational scaffolding around it. Specifications live in someone's head. Policy is enforced by personality. Reviews are skipped under deadline. Audits happen, but only after something breaks. This is not a tooling problem. It is the absence of method, and at AI-assisted velocity that absence does in months the damage that would otherwise take years.

What we value

- A written specification over a verbal prompt.
- Codified policy over personal preference.
- A scoped agent over an agent left to its imagination.
- Knowledge shared across the organization over knowledge locked in one developer's head.
- Automated quality gates over good intentions.
- Human review calibrated to risk over review that gets skipped under deadline.
- Audit by default over audit after something breaks.

There is value in the right side of each pair. We value the left side more.

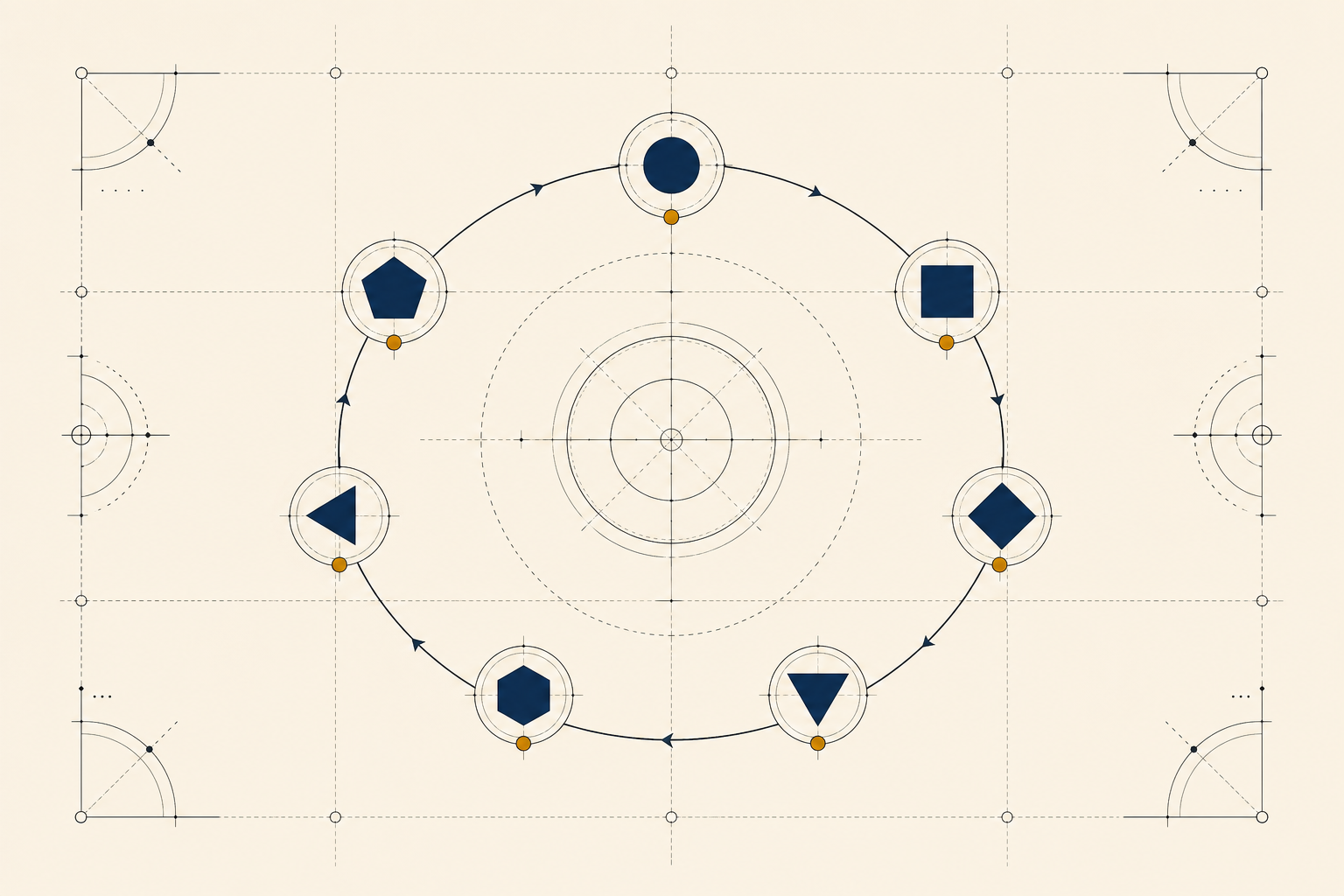

Seven disciplines. One method. SPECTRA.